The Price of Dominance

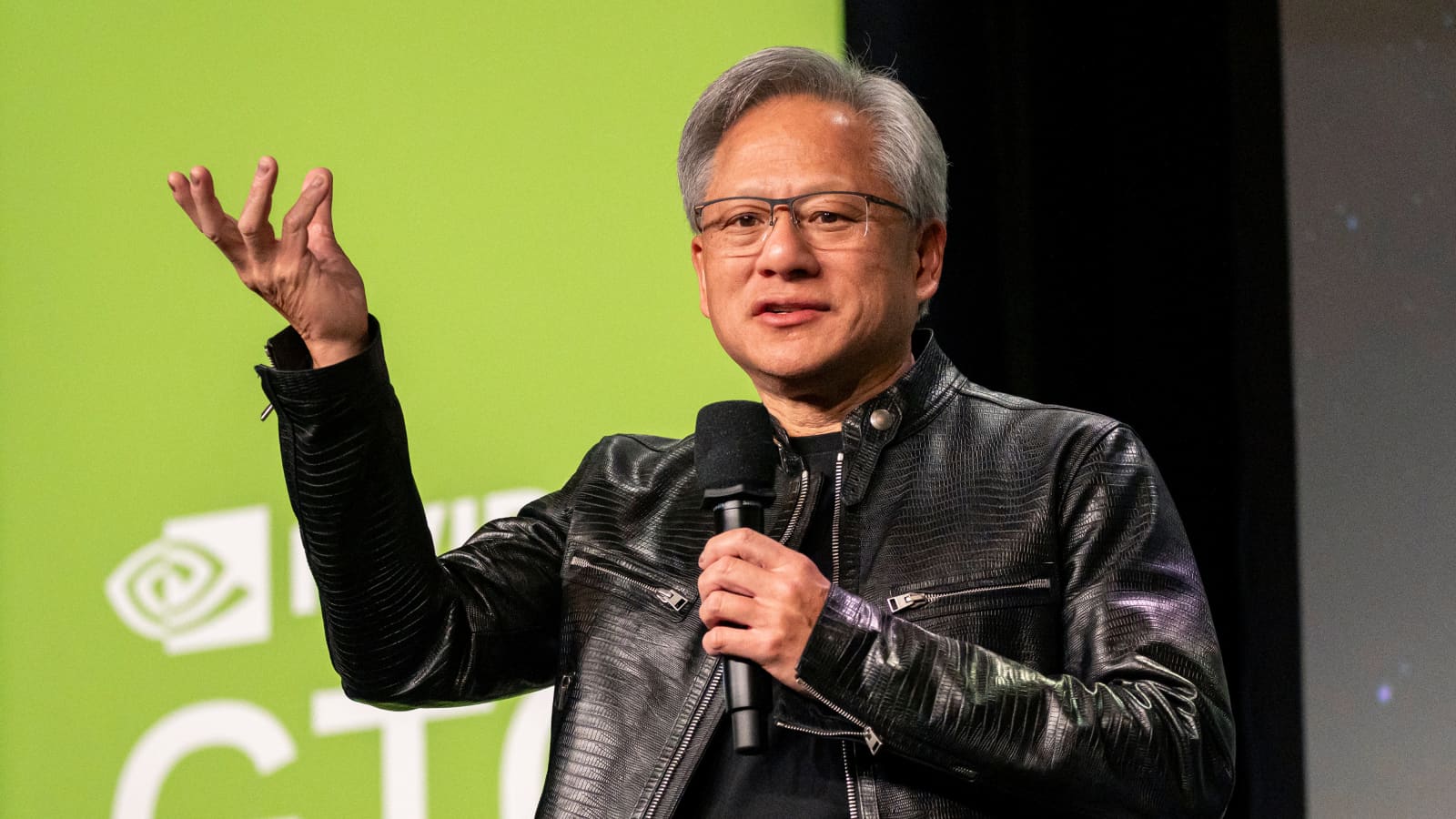

Nvidia’s gross margins are a target. By pushing the Blackwell architecture into the stratosphere of enterprise pricing, Jensen Huang has done more than enrich shareholders. He has subsidized his own competition. The logic is simple. High prices create a protective umbrella under which inefficient or experimental architectures can find a foothold. Cerebras is currently the primary beneficiary of this pricing arrogance.

The tape shows the reality. As of the market close on May 14, Nvidia ($NVDA) continues to trade at a premium that assumes a permanent monopoly on the AI data center. However, the whispers from the floor of the New York Stock Exchange suggest a rotation is brewing. Investors are no longer asking if Nvidia can make the chips. They are asking if the hyperscalers can afford to keep buying them. Per the latest market data from Bloomberg, the cost of compute is now the single largest line item for Tier 1 cloud providers, surpassing even energy and real estate.

The Wafer Scale Insurgency

Cerebras does not play by the rules of traditional lithography. Their Wafer Scale Engine 3 (WSE-3) is a single piece of silicon the size of a dinner plate. It bypasses the interconnect bottleneck that plagues Nvidia clusters. When you link ten thousand H200s, you lose massive amounts of efficiency to the copper and fiber optics connecting them. Cerebras keeps the data on the silicon. This is not just a technical nuance. It is a fundamental shift in the physics of compute.

Nvidia’s pricing strategy has left a gaping hole in the mid-market. While the Blackwell B200 is a marvel of engineering, its total cost of ownership (TCO) is becoming prohibitive for anyone without a sovereign wealth fund’s balance sheet. Cerebras, once a curiosity for national laboratories, is now being vetted by enterprise giants who are tired of the Nvidia tax. The hardware is only half the battle. The software moat is thinning. With the maturation of PyTorch and Triton, the proprietary advantage of CUDA is no longer the impenetrable fortress it was in 2024.

Chart: AI Hardware Margin Trends, 2024 to 2026

The SpaceX Liquidity Paradox

SpaceX is the ghost at the IPO feast. For years, the market has salivated over a Starlink spin-off. The narrative was simple. Starlink provides the recurring revenue that the lumpy, capital-intensive launch business lacks. But the reality in May 2026 is more complex. Elon Musk has built a self-sustaining ecosystem that does not need the public markets. In fact, the public markets might be a liability.

Recent secondary market trades value SpaceX at approximately $215 billion. This is a staggering figure that exceeds the market capitalization of most companies in the S&P 500. According to reporting from Reuters, SpaceX has achieved a level of vertical integration that makes an IPO almost counterproductive. Why deal with the SEC and quarterly earnings calls when you can raise billions in private placements from the world’s most patient capital? The SpaceX IPO isn’t a financial necessity. It is a political tool.

| Metric | Nvidia ($NVDA) | SpaceX (Private) | Cerebras (Pre-IPO) |
|---|---|---|---|
| Market Valuation | $3.2 Trillion | $215 Billion | $8.5 Billion (Est.) |
| Primary Revenue Driver | AI Data Center | Starlink Subscriptions | Wafer Scale Clusters |
| Gross Margin (Q1 2026) | 81.2% | 54% (Starlink) | 59% (Projected) |
| Moat Source | CUDA Software | Launch Reusability | Interconnect Density |

The Interconnect Bottleneck

Bandwidth is the new gold. As models grow to 100 trillion parameters, the time spent moving data between chips is the primary constraint on training speed. Nvidia’s solution is NVLink. It is efficient, but it is expensive. It requires a proprietary stack of switches, cables, and transceivers. This is where the “pricing differently” argument comes into play. If Nvidia had priced its networking gear more aggressively, it could have locked out the competition for a decade.

Instead, they optimized for short-term margin. This opened the door for Ethernet-based alternatives and radical architectures like Cerebras. The WSE-3 eliminates the need for external networking for many workloads. By keeping the entire model on a single wafer, Cerebras achieves a memory bandwidth of 21 petabytes per second. For comparison, an Nvidia H200 cluster requires miles of fiber to reach a fraction of that throughput. The technical debt of the legacy GPU architecture is finally coming due.
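The bandwidth argument can be made concrete with a toy model. The sketch below assumes each training step is pure compute plus data movement, with no overlap between the two, and asks what share of the step is spent waiting on the wire. Every input is an illustrative placeholder, not a measurement of any Nvidia or Cerebras system, and the function names are hypothetical.

```python
def step_time(compute_s: float, bytes_moved: float, bandwidth_bytes_per_s: float) -> float:
    """Seconds per training step: compute plus data movement (no overlap assumed)."""
    return compute_s + bytes_moved / bandwidth_bytes_per_s


def communication_share(compute_s: float, bytes_moved: float, bandwidth_bytes_per_s: float) -> float:
    """Fraction of a step spent moving data rather than computing."""
    comm_s = bytes_moved / bandwidth_bytes_per_s
    return comm_s / (compute_s + comm_s)


# Illustrative placeholder inputs (assumptions, not vendor figures).
COMPUTE_S = 1.0      # seconds of arithmetic per step
BYTES_MOVED = 1e12   # 1 TB exchanged per step
SLOW_LINK = 1e12     # 1 TB/s effective bandwidth
FAST_LINK = 1e15     # 1 PB/s effective bandwidth

slow_share = communication_share(COMPUTE_S, BYTES_MOVED, SLOW_LINK)
fast_share = communication_share(COMPUTE_S, BYTES_MOVED, FAST_LINK)

print(f"slow link: {slow_share:.1%} of each step is data movement")
print(f"fast link: {fast_share:.2%} of each step is data movement")
```

Under these made-up inputs, the slower link spends half of every step moving data, while the faster one spends a fraction of a percent. Real clusters overlap communication with compute, so the model overstates the penalty, but the direction of the effect is the point.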

The Sovereign Compute Wave

Nations are now buyers. We are seeing a shift from corporate AI to sovereign AI. Countries like Saudi Arabia, Japan, and the United Arab Emirates are building their own sovereign clouds. They are not looking for the best stock to buy. They are looking for the most efficient way to achieve digital autonomy. This shift favors the “appliance” model offered by Cerebras over the “component” model of Nvidia. A sovereign state wants a turnkey data center, not a box of parts and a complex integration contract.

The SEC filings from earlier this month show a surge of interest in non-x86 and non-GPU architectures. Per the latest Nvidia 10-Q, the company acknowledges that competition is intensifying not just from traditional rivals like AMD, but from internal silicon projects at Google, Amazon, and Microsoft. The hyperscalers are tired of being Nvidia’s margin engine. They are building their own exits.

The next data point to watch is the June 12 Federal Reserve meeting. If interest rates remain elevated, the cost of capital for massive AI clusters will force a secondary wave of optimization. Watch the 10-year Treasury yield. If it crosses 4.8%, expect a sharp correction in the AI hardware sector as the “growth at any cost” era officially ends.